HyperSQL Database Engine 2.7.4

2024-10-25

Table of Contents

- Preface

- 1. Running and Using HyperSQL

- 2. SQL Language

- SQL Standards Support

- Definition Statements (DDL and others)

- Data Manipulation Statements (DML)

- Data Query Statements (DQL)

- Calling User Defined Procedures and Functions

- Setting Properties for the Database and the Session

- General Operations on Database

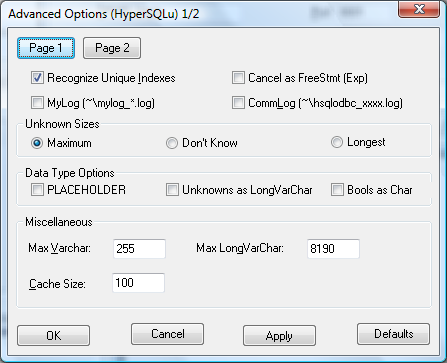

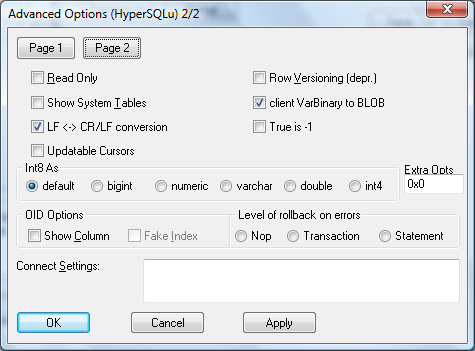

- Transaction Statements

- Comments in Statements

- Statements in SQL Routines

- SQL Data and Tables

- Short Guide to Data Types

- Data Types and Operations

- Datetime types

- Interval Types

- Arrays

- 3. Schemas and Database Objects

- Overview

- Schemas and Schema Objects

- Statements for Schema Definition and Manipulation

- Common Elements and Statements

- Renaming Objects

- Commenting Objects

- Schema Creation

- Table Creation

- Temporal System-Versioned Tables and SYSTEM_TIME Period

- Table Settings

- Table Manipulation

- View Creation and Manipulation

- Domain Creation and Manipulation

- Trigger Creation

- Routine Creation

- Sequence Creation

- SQL Procedure Statement

- Other Schema Objects Creation and Alteration

- The Information Schema

- 4. Built In Functions

- 5. Data Access and Change

- 6. Sessions and Transactions

- 7. Text Tables

- 8. Access Control

- 9. SQL-Invoked Routines

- Overview

- Routine Definition

- SQL Language Routines (PSM)

- Advantages and Disadvantages

- Routine Statements

- Compound Statement

- Table Variables

- Variables

- Cursors

- Handlers

- Assignment Statement

- Select Statement : Single Row

- Formal Parameters

- Iterated Statements

- Iterated FOR Statement

- Conditional Statements

- Return Statement

- Control Statements

- Raising Exceptions

- Routine Polymorphism

- Returning Data From Procedures

- Recursive Routines

- Java Language Routines (SQL/JRT)

- User-Defined Aggregate Functions

- 10. Triggers

- 11. System Management

- Modes of Operation

- Indexes and Query Speed

- Query Processing and Optimisation

- ACID, Persistence and Reliability

- Temporal System-Versioned Tables

- Replicated Databases

- Using Table Spaces

- Checking Database Tables and Indexes

- Backing Up and Restoring Database Catalogs

- Encrypted Databases

- Monitoring Database Operations

- Database Security

- Statements

- 12. Deployment Guide

- 13. Compatibility With Other DBMS

- 14. Properties

- 15. HyperSQL Network Listeners (Servers)

- 16. HyperSQL on UNIX

- 17. HyperSQL via ODBC

- A. Lists of Keywords

- B. HyperSQL Database Files and Recovery

- C. Building HSQLDB Jars

- D. HyperSQL with OpenOffice

- E. HyperSQL File Links

- SQL Index

- General Index

List of Tables

- 1. Available formats of this document

- 2.1. List of SQL types

- 4.1. TO_CHAR (number) format elements

- 4.2. TO_CHAR, TO_DATE and TO_TIMESTAMP format elements

- 14.1. Memory Database URL

- 14.2. File Database URL

- 14.3. Resource Database URL

- 14.4. Server Database URL

- 14.5. User and Password

- 14.6. Closing old ResultSet when Statement is reused

- 14.7. Column Names in JDBC ResultSet

- 14.8. In-memory LOBs from JDBC ResultSet

- 14.9. Empty batch in JDBC PreparedStatement

- 14.10. Automatic Shutdown

- 14.11. OpenOffice and Libre Office usage

- 14.12. Validity Check Property

- 14.13. Creating New Database Check Property

- 14.14. Execution of Multiple SQL Statements etc.

- 14.15. SQL Keyword Use as Identifier

- 14.16. SQL Keyword Starting with the Underscore or Containing Dollar Characters

- 14.17. Reference to Columns Names

- 14.18. String Size Declaration

- 14.19. Truncation of trailing spaces from string

- 14.20. Type Enforcement in Comparison and Assignment

- 14.21. Foreign Key Triggered Data Change

- 14.22. Use of LOB for LONGVAR Types

- 14.23. Type of string literals in CASE WHEN

- 14.24. Concatenation with NULL

- 14.25. NULL in Multi-Column UNIQUE Constraints

- 14.26. Truncation or Rounding in Type Conversion

- 14.27. Decimal Scale of Division and AVG Values

- 14.28. Support for NaN values

- 14.29. Sort order of NULL values

- 14.30. Sort order of NULL values with DESC

- 14.31. String Comparison with Padding

- 14.32. Default Locale Language Collation

- 14.33. Case-Insensitive Varchar columns

- 14.34. Lowercase column identifiers in ResultSet

- 14.35. Storage of Live Java Objects

- 14.36. Names of System Indexes Used for Constraints

- 14.37. DB2 Style Syntax

- 14.38. MSSQL Style Syntax

- 14.39. MySQL Style Syntax

- 14.40. Oracle Style Syntax

- 14.41. PostgreSQL Style Syntax

- 14.42. Maximum Iterations of Recursive Queries

- 14.43. Default Table Type

- 14.44. Transaction Control Mode

- 14.45. Default Isolation Level for Sessions

- 14.46. Transaction Rollback in Deadlock

- 14.47. Transaction Rollback on Interrupt

- 14.48. Interval Types

- 14.49. Temporary Result Rows in Memory

- 14.50. Opening Database as Read Only

- 14.51. Opening Database Without Modifying the Files

- 14.52. Event Logging

- 14.53. SQL Logging

- 14.54. Table Spaces for Cached Tables

- 14.55. Huge database files and tables

- 14.56. Use of NIO for Disk Table Storage

- 14.57. Use of NIO for Disk Table Storage

- 14.58. Internal Backup of the .data File

- 14.59. Unused Space Recovery

- 14.60. Rows Cached In Memory

- 14.61. Size of Rows Cached in Memory

- 14.62. Size Scale of Disk Table Storage

- 14.63. Size Scale of LOB Storage

- 14.64. Compression of BLOB and CLOB data

- 14.65. Use of Lock File

- 14.66. Logging Data Change Statements

- 14.67. Automatic Checkpoint Frequency

- 14.68. Automatic Defrag at Checkpoint

- 14.69. Compression of the .script file

- 14.70. Logging Data Change Statements Frequency

- 14.71. Logging Data Change Statements Frequency

- 14.72. Recovery Log Processing

- 14.73. Default Properties for TEXT Tables

- 14.74. Forcing Garbage Collection

- 14.75. Crypt Property For LOBs

- 14.76. Cipher Key for Encrypted Database

- 14.77. Cipher Initialization Vector for Encrypted Database

- 14.78. Crypt Provider Encrypted Database

- 14.79. Cipher Specification for Encrypted Database

- 14.80. Logging Framework

- 14.81. Text Tables

- 14.82. Java Functions

- 15.1. common server and webserver properties

- 15.2. server properties

- 15.3. webserver properties

- 17.1. Settings List

List of Examples

- 1.1. Java code to connect to the local hsql Server

- 1.2. Java code to connect to the local http Server

- 1.3. Java code to connect to the local secure SSL hsqls: and https: Servers

- 1.4. specifying a connection property to shutdown the database when the last connection is closed

- 1.5. specifying a connection property to disallow creating a new database

- 3.1. inserting the next sequence value into a table row

- 3.2. numbering returned rows of a SELECT in sequential order

- 3.3. using the last value of a sequence

- 3.4. Column values which satisfy a 2-column UNIQUE constraint

- 6.1. User-defined Session Variables

- 6.2. User-defined Temporary Session Tables

- 6.3. Setting Transaction Characteristics

- 6.4. Locking Tables

- 6.5. Rollback

- 6.6. Setting Session Characteristics

- 6.7. Setting Session Authorization

- 6.8. Setting Session Time Zone

- 11.1. Using CACHED tables for the LOB schema

- 11.2. Creating a system-versioned table

- 11.3. Displaying DbBackup Syntax

- 11.4. Offline Backup Example

- 11.5. Listing a Backup with DbBackup

- 11.6. Restoring a Backup with DbBackup

- 11.7. SQL Log Example

- 11.8. Finding foreign key rows with no parents after a bulk import

- 12.1. Using CACHED tables for the LOB schema

- 12.2. MainInvoker Example

- 12.3. Sample Range Ivy Dependency

- 12.4. Sample Range Maven Dependency

- 12.5. Sample Range Gradle Dependency

- 12.6. Sample Range ivy.xml loaded by Ivyxml plugin

- 12.7. Sample Range Groovy Dependency, using Grape

- 15.1. Exporting certificate from the server's keystore

- 15.2. Adding a certificate to the client keystore

- 15.3. Specifying your own trust store to a JDBC client

- 15.4. Getting a pem-style private key into a JKS keystore

- 15.5. Validating and Testing an ACL file

- 16.1. example sqltool.rc stanza

- C.1. Building the standard HSQLDB jar file with Ant

- C.2. Example source code before CodeSwitcher is run

- C.3. CodeSwitcher command line invocation

- C.4. Source code after CodeSwitcher processing

HyperSQL DataBase (HSQLDB) is a modern relational database system that conforms closely to the SQL:2023 Standard and JDBC 4.3 specifications. It supports all core features and many optional features of SQL:2023.

The first versions of HSQLDB were released in 2001. Version 2, first released in 2010, was a complete rewrite of most parts of the database engine.

This documentation covers HyperSQL version 2.7.4 and has been regularly improved and updated. The latest, updated version can be found at http://hsqldb.org/doc/2.0/

If you notice any mistakes in this document, or if you have problems with the procedures themselves, please use the HSQLDB support facilities which are listed at http://hsqldb.org/support

This document is available in several formats.

You may be reading this document right now at http://hsqldb.org/doc/2.0, or in a distribution somewhere else. I hereby call the document distribution from which you are reading this, your current distro.

http://hsqldb.org/doc/2.0 hosts the latest production versions of all available formats. If you want a different format of the same version of the document you are reading now, then you should try your current distro. If you want the latest production version, you should try http://hsqldb.org/doc/2.0.

Sometimes, distributions other than http://hsqldb.org/doc/2.0 do not host all available formats. So, if you can't access the format that you want in your current distro, you have no choice but to use the newest production version at http://hsqldb.org/doc/2.0.

Table 1. Available formats of this document

| format | your distro | at http://hsqldb.org/doc/2.0 |
|---|---|---|
| Chunked HTML | index.html | http://hsqldb.org/doc/2.0/guide/ |
| All-in-one HTML | guide.html | http://hsqldb.org/doc/2.0/guide/guide.html |
| PDF | guide.pdf | http://hsqldb.org/doc/2.0/guide/guide.pdf |

If you are reading this document now with a standalone PDF reader, your distro links may not work.

$Revision: 6787 $

Copyright 2002-2024 Fred Toussi. Permission is granted to distribute this document without any alteration under the terms of the HSQLDB license. Additional permission is granted to the HSQL Development Group to distribute this document with or without alterations under the terms of the HSQLDB license.

2024-10-25

HyperSQL Database (HSQLDB) is a modern relational database system. Version 2.7.4 is the latest release of the all-new version 2 code. Written from the ground up to follow the international ISO SQL:2023 standard, it supports the complete set of the classic features of the SQL Standard, together with optional features such as stored procedures and triggers.

HyperSQL version 2.7.4 is compatible with Java 11 or later and supports the Java module system. A version of the HSQLDB jar compiled with JDK 8 is also included in the download zip package. These jars are also available from Maven repositories.

HyperSQL is used for development, testing and deployment of database applications.

SQL Standard compliance is the most distinctive characteristic of HyperSQL.

There are several other distinctive features. HyperSQL can provide database access within the user's application process, within an application server, or as a separate server process. HyperSQL can run entirely in memory using a fast memory structure. HyperSQL can use disk persistence in a flexible way, with reliable crash-recovery. HyperSQL is the only open-source relational database management system with a high-performance dedicated LOB storage system, suitable for gigabytes of LOB data. It is also the only relational database that can create and access large comma-delimited files as SQL tables. HyperSQL supports three live-switchable transaction control models, including fully multi-threaded MVCC, and is suitable for high-performance transaction processing applications. HyperSQL is also suitable for business intelligence, ETL and other applications that process large data sets. HyperSQL has a wide range of enterprise deployment options, such as XA transactions, connection pooling data sources and remote authentication.

New SQL syntax compatibility modes have been added to HyperSQL. These modes allow a high degree of compatibility with several other database systems which use non-standard SQL syntax.

HyperSQL is written in the Java programming language and runs in a Java virtual machine (JVM). It supports the JDBC interface for database access.

The ODBC driver for PostgreSQL can be used with HSQLDB.

This guide covers the database engine features, SQL syntax and different modes of operation. The JDBC interfaces, pooling and XA components are documented in the JavaDoc. Utilities such as SqlTool and DatabaseManagerSwing are covered in a separate Utilities Guide.

The HSQLDB jar package, hsqldb.jar, is located in the /lib directory of the ZIP package and contains several components and programs.

Components of the HSQLDB jar package

- HyperSQL RDBMS Engine (HSQLDB)

- HyperSQL JDBC Driver

- DatabaseManagerSwing GUI database access tool

The HyperSQL RDBMS and JDBC Driver provide the core functionality. DatabaseManagerSwing is a database access tool that can be used with any database engine that has a JDBC driver.

An additional jar, sqltool.jar, contains SqlTool, a command line database access tool that can also be used with other database engines.

The access tools are used for interactive user access to databases, including creation of a database, inserting or modifying data, or querying the database. All tools are run in the normal way for Java programs. In the following example the Swing version of the Database Manager is executed. The hsqldb.jar is located in the directory ../lib relative to the current directory.

java -cp ../lib/hsqldb.jar org.hsqldb.util.DatabaseManagerSwing

If hsqldb.jar is in the current directory, the command would change to:

java -cp hsqldb.jar org.hsqldb.util.DatabaseManagerSwing

Main class for the HSQLDB tools

- org.hsqldb.util.DatabaseManagerSwing

When a tool is up and running, you can connect to a database (which may be a new database) and use SQL commands to access and modify the data.

Tools can use command line arguments. You can add the command line argument --help to get a list of available arguments for these tools.

Double clicking the HSQLDB jar will start the DatabaseManagerSwing application.

Each HyperSQL database is called a catalog. There are three types of catalog depending on how the data is stored.

Types of catalog data

- mem: stored entirely in RAM, without any persistence beyond the JVM process's life

- file: stored in the file system

- res: stored in a Java resource, such as a Jar, and always read-only

All-in-memory mem: catalogs can be used for test data or as sophisticated caches for an application. These databases do not have any files.

A file: catalog consists of between 2 and 6 files, all named the same but with different extensions, located in the same directory. For example, the database named "testdb" consists of the following files:

- testdb.properties

- testdb.script

- testdb.log

- testdb.data

- testdb.backup

- testdb.lobs

The properties file contains a few settings about the database. The script file contains the definition of tables and other database objects, plus the data for memory tables. The log file contains recent changes to the database. The data file contains the data for cached tables and the backup file is used to revert to the last known consistent state of the data file. All these files are essential and should never be deleted. For some catalogs, the testdb.data and testdb.backup files will not be present. In addition to those files, a HyperSQL database may link to any formatted text files, such as CSV lists, anywhere on the disk.

While the "testdb" catalog is open, a testdb.log file is used to write the changes made to data. This file is removed at a normal SHUTDOWN. Otherwise (with abnormal shutdown) this file is used at the next startup to redo the changes. A testdb.lck file is also used to record the fact that the database is open. This is deleted at a normal SHUTDOWN.
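The file layout described above can be inspected from plain Java. The following is a minimal sketch using only java.nio.file; the class `CatalogFiles` and its methods are hypothetical helpers and not part of HSQLDB. It lists which of the six catalog files are present and applies a simple heuristic based on the text: because the .log and .lck files are removed at a normal SHUTDOWN, finding either one suggests the catalog is open or was not shut down normally.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of HSQLDB: inspects the files of a file: catalog.
final class CatalogFiles {

    // File extensions listed in this guide for a file: catalog.
    static final List<String> EXTENSIONS =
            List.of("properties", "script", "log", "data", "backup", "lobs");

    // Returns the catalog files that currently exist in dir for the given database name.
    static List<Path> present(Path dir, String dbName) {
        List<Path> found = new ArrayList<>();
        for (String ext : EXTENSIONS) {
            Path candidate = dir.resolve(dbName + "." + ext);
            if (Files.isRegularFile(candidate)) {
                found.add(candidate);
            }
        }
        return found;
    }

    // Heuristic only: a .lck or .log file suggests the catalog is open
    // or was not closed with a normal SHUTDOWN.
    static boolean openOrNotCleanlyClosed(Path dir, String dbName) {
        return Files.exists(dir.resolve(dbName + ".lck"))
                || Files.exists(dir.resolve(dbName + ".log"));
    }
}
```

For the "testdb" example, `CatalogFiles.present(Path.of("."), "testdb")` would return whichever of the six files exist in the current directory. The check is read-only and never deletes or modifies catalog files.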

Note: When the engine closes the database at a shutdown, it creates temporary files with the extension

A res: catalog consists of the files for a small, read-only database that can be stored inside a Java resource such as a ZIP or JAR archive and distributed as part of a Java application program.

In general, JDBC is used for all access to databases. This is done by making a connection to the database, then using various methods of the java.sql.Connection object that is returned to access the data. Access to an in-process database is started from JDBC, with the database path specified in the connection URL. For example, if the file: database name is "testdb" and its files are located in the same directory as where the command to run your application was issued, the following code is used for the connection:

Connection c = DriverManager.getConnection("jdbc:hsqldb:file:testdb", "SA", "");

The database file path format can be specified using forward slashes in Windows hosts as well as Linux hosts. So relative paths or paths that refer to the same directory on the same drive can be identical. For example if your database directory in Linux is /opt/db/ containing a database testdb (with files named testdb.*), then the database file path is /opt/db/testdb. If you create an identical directory structure on the C: drive of a Windows host, you can use the same URL in both Windows and Linux:

Connection c = DriverManager.getConnection("jdbc:hsqldb:file:/opt/db/testdb", "SA", "");

When using relative paths, these paths will be taken relative to the directory in which the shell command to start the Java Virtual Machine was executed. Refer to the Javadoc for JDBCConnection for more details.

Paths and database names for file databases are treated as case-sensitive when the database is created or the first connection is made to the database. But if a second connection is made to an open database, using a path and name that differs only in case, then the connection is made to the existing open database. This measure is necessary because in Windows the two paths are equivalent.

A mem: database is specified by the mem: protocol. For mem: databases, the path is simply a name. Several mem: databases can exist at the same time and are distinguished by their names. In the example below, the database is called "mymemdb":

Connection c = DriverManager.getConnection("jdbc:hsqldb:mem:mymemdb", "SA", "");

A res: database is specified by the res: protocol. As it is a Java resource, the database path is a Java URL (similar to the path to a class). In the example below, "resdb" is the root name of the database files, which exist in the directory "org/my/path" within the classpath (probably in a Jar). A Java resource is stored in a compressed format and is decompressed in memory when it is used. For this reason, a res: database should not contain large amounts of data and is always read-only.

Connection c = DriverManager.getConnection("jdbc:hsqldb:res:org.my.path.resdb", "SA", "");
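The three in-process URL forms shown above differ only in the protocol part and the path. As an illustration, the sketch below builds them from their parts; `InProcessUrls` is a hypothetical helper, not an HSQLDB class. It also converts backslashes to forward slashes, on the basis of the statement above that forward slashes can be used on Windows hosts as well as Linux hosts.

```java
// Hypothetical helper, not part of HSQLDB: builds in-process connection URLs.
final class InProcessUrls {

    static final String PREFIX = "jdbc:hsqldb:";

    // A mem: database is identified simply by its name.
    static String mem(String name) {
        return PREFIX + "mem:" + name;
    }

    // A file: database is identified by its path without a file extension.
    // Backslashes are replaced so the same style of path is used on all hosts.
    static String file(String path) {
        return PREFIX + "file:" + path.replace('\\', '/');
    }

    // A res: database is identified by its location within the classpath.
    static String res(String resourcePath) {
        return PREFIX + "res:" + resourcePath;
    }
}
```

The resulting strings are passed to `DriverManager.getConnection` exactly as in the examples above, for instance `DriverManager.getConnection(InProcessUrls.mem("mymemdb"), "SA", "")`.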

The first time an in-process connection is made to a database, some general data structures are initialised and a helper thread is started. After this, creation of connections and calls to JDBC methods of the connections execute as if they are part of the Java application that is making the calls. When the SQL command "SHUTDOWN" is executed, the global structures and helper thread for the database are destroyed.

Note that only one Java process at a time can make in-process connections to a given file: database. However, if the file: database has been made read-only, or if connections are made to a res: database, then it is possible to make in-process connections from multiple Java processes.

For most applications, in-process access is faster, as the data is not converted and sent over the network. The main drawback is that it is not possible by default to connect to the database from outside your application. As a result you cannot check the contents of the database with external tools such as Database Manager while your application is running.

Server modes provide the maximum accessibility. The database engine runs in a JVM and opens one or more in-process catalogs. It listens for connections from programs on the same computer or other computers on the network. It translates these connections into in-process connections to the databases.

Several different programs can connect to the server and retrieve or update information. Application programs (clients) connect to the server using the HyperSQL JDBC driver. In most server modes, the server can serve an unlimited number of databases that are specified at the time of running the server, or optionally, as a connection request is received.

A Server mode is also the preferred mode of running the database during development. It allows you to query the database from a separate database access utility while your application is running.

There are three server modes, based on the protocol used for communications between the client and server. They are briefly discussed below. More details on servers are provided in the HyperSQL Network Listeners (Servers) chapter.

The HSQL Server mode is the preferred and fastest way of running a database server. It uses a proprietary communications protocol. The server is started with a command similar to those described above for running the tools. The following example starts the server with one (default) database with files named "mydb.*" and the public name "xdb". The public name hides the file names from users.

java -cp ../lib/hsqldb.jar org.hsqldb.server.Server --database.0 file:mydb --dbname.0 xdb

The command line argument --help can be used to get a list of available arguments. Connections are made using an hsql: URL.

Connection c = DriverManager.getConnection("jdbc:hsqldb:hsql://localhost/xdb", "SA", "");

This method of access is used when the computer hosting the database server is restricted to the HTTP protocol. The only reason for using this method of access is restrictions imposed by firewalls on the client or server machines and it should not be used where there are no such restrictions. The HyperSQL HTTP Server is a special web server that allows JDBC clients to connect via HTTP. The server can also act as a small general-purpose web server for static pages.

To run an HTTP server, replace the main class for the server in the example command line above with WebServer:

java -cp ../lib/hsqldb.jar org.hsqldb.server.WebServer --database.0 file:mydb --dbname.0 xdb

The command line argument --help can be used to get a list of available arguments. Connections are made using an http: URL.

Connection c = DriverManager.getConnection("jdbc:hsqldb:http://localhost/xdb", "SA", "");

This method of access also uses the HTTP protocol. It is used when a servlet engine (or application server) such as Tomcat or Resin provides access to the database. The Servlet Mode cannot be started independently from the servlet engine. The Servlet class, in the HSQLDB jar, should be installed on the application server to provide the connection. The database file path is specified using an application server property. Refer to the source file src/org/hsqldb/server/Servlet.java to see the details.

Both HTTP Server and Servlet modes can be accessed using the JDBC driver at the client end. They do not provide a web front end to the database. The Servlet mode can serve multiple databases.

Please note that you do not normally use this mode if you are using the database engine in an application server. In this situation, connections to a catalog are usually made in-process, or using the hsql: protocol to an HSQL Server.

When a HyperSQL server is running, client programs can connect to it using the HSQLDB JDBC Driver contained in hsqldb.jar. Full information on how to connect to a server is provided in the Java Documentation for JDBCConnection (located in the /doc/apidocs directory of HSQLDB distribution). A common example is connection to the default port (9001) used for the hsql: protocol on the same machine:

Example 1.1. Java code to connect to the local hsql Server

try {
    Class.forName("org.hsqldb.jdbc.JDBCDriver");
} catch (Exception e) {
    System.err.println("ERROR: failed to load HSQLDB JDBC driver.");
    e.printStackTrace();
    return;
}

Connection c = DriverManager.getConnection("jdbc:hsqldb:hsql://localhost/xdb", "SA", "");

If the HyperSQL HTTP server is used, the protocol is http: and the URL will be different:

Example 1.2. Java code to connect to the local http Server

Connection c = DriverManager.getConnection("jdbc:hsqldb:http://localhost/xdb", "SA", "");

Note in the above connection URL, there is no mention of the database file, as this was specified when running the server. Instead, the public name defined for dbname.0 is used. Also, see the HyperSQL Network Listeners (Servers) chapter for the connection URL when there is more than one database per server instance.

When a HyperSQL server is run, network access should be adequately protected. Source IP addresses may be restricted by use of our Access Control List feature, network filtering software, firewall software, or standalone firewalls. Only secure passwords should be used. Most importantly, the password for the default system user should be changed from the default empty string. If you are purposefully providing data to the public, then the wide-open public network connection should be used exclusively to access the public data via read-only accounts (i.e., neither secure data nor privileged accounts should use this connection). These considerations also apply to HyperSQL servers run with the HTTP protocol.

HyperSQL provides two optional security mechanisms: the encrypted SSL protocol and Access Control Lists. Both mechanisms can be specified when running the Server or WebServer. On the client, the URL to connect to an SSL server is slightly different:

Example 1.3. Java code to connect to the local secure SSL hsqls: and https: Servers

Connection c = DriverManager.getConnection("jdbc:hsqldb:hsqls://localhost/xdb", "SA", "");

Connection c = DriverManager.getConnection("jdbc:hsqldb:https://localhost/xdb", "SA", "");

The security features are discussed in detail in the HyperSQL Network Listeners (Servers) chapter.
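The server URLs in Examples 1.1 to 1.3 share one shape: a protocol (hsql:, http:, hsqls: or https:), a host, and the public database name. The sketch below, a hypothetical `ServerUrls` helper that is not part of HSQLDB, assembles such URLs. The optional port argument is an assumption for illustration; the text above mentions only the default port 9001 for hsql:, so pass 0 to omit the port and rely on the default for the chosen protocol.

```java
// Hypothetical helper, not part of HSQLDB: builds URLs for the server protocols.
final class ServerUrls {

    // The four protocols named in this chapter.
    enum Protocol {
        HSQL("hsql"), HSQLS("hsqls"), HTTP("http"), HTTPS("https");

        final String scheme;

        Protocol(String scheme) {
            this.scheme = scheme;
        }
    }

    // A port of 0 or less means "omit the port and use the protocol's default".
    static String url(Protocol protocol, String host, int port, String dbName) {
        StringBuilder sb = new StringBuilder("jdbc:hsqldb:")
                .append(protocol.scheme).append("://").append(host);
        if (port > 0) {
            sb.append(':').append(port);
        }
        return sb.append('/').append(dbName).toString();
    }
}
```

Note that `dbName` is the public name given with --dbname.0 when the server was started ("xdb" in the examples), not the name of the database files.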

A server can provide connections to more than one database. In the examples above, more than one set of database names can be specified on the command line. It is also possible to specify all the databases in a .properties file, instead of the command line. These capabilities are covered in the HyperSQL Network Listeners (Servers) chapter.

As shown so far, a java.sql.Connection object is always used to access the database. But performance depends on the type of connection and how it is used.

Establishing a connection and closing it has some overhead, so it is not good practice to create a new connection to perform a small number of operations. A connection should be reused as much as possible and closed only when it is not going to be used again for a long while.

Reuse is more important for server connections. A server connection uses a TCP port for communications. Each time a connection is made, a port is allocated by the operating system and deallocated after the connection is closed. If many connections are made from a single client, the operating system may not be able to keep up and may refuse the connection attempt.

A java.sql.Connection object has some methods that return further java.sql.* objects. All these objects belong to the connection that returned them and are closed when the connection is closed. These objects, listed below, can be reused. But if they are not needed after performing the operations, they should be closed.

A java.sql.DatabaseMetaData object is used to get metadata for the database.

A java.sql.Statement object is used to execute queries and data change statements. A single java.sql.Statement can be reused to execute a different statement each time.

A java.sql.PreparedStatement object is used to execute a single statement repeatedly. The SQL statement usually contains parameters, which can be set to new values before each reuse. When a java.sql.PreparedStatement object is created, the engine keeps the compiled SQL statement for reuse, until the java.sql.PreparedStatement object is closed. As a result, repeated use of a java.sql.PreparedStatement is much faster than using a java.sql.Statement object.

A java.sql.CallableStatement object is used to execute an SQL CALL statement. The SQL CALL statement may contain parameters, which should be set to new values before each reuse. Similar to java.sql.PreparedStatement, the engine keeps the compiled SQL statement for reuse, until the java.sql.CallableStatement object is closed.
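To illustrate the reuse of a java.sql.PreparedStatement described above, the following sketch prepares one INSERT statement and executes it once per value, setting the parameter before each execution. It uses only standard JDBC calls. The class `PreparedReuse`, the table `people` and the column `name` are hypothetical and assumed to exist only for this example.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import java.util.List;

// Sketch only: the table "people" and its column "name" are hypothetical.
final class PreparedReuse {

    // Prepares the INSERT once and reuses it for every name.
    // Returns the total number of rows reported as inserted.
    static int insertNames(Connection connection, List<String> names) throws SQLException {
        String sql = "INSERT INTO people (name) VALUES (?)";
        int inserted = 0;
        // try-with-resources closes the statement when it is no longer needed.
        try (PreparedStatement ps = connection.prepareStatement(sql)) {
            for (String name : names) {
                ps.setString(1, name);          // new parameter value for this execution
                inserted += ps.executeUpdate(); // the same prepared statement each time
            }
        }
        return inserted;
    }
}
```

The connection itself is passed in and left open, in line with the advice above to reuse connections and close them only when they will not be needed for a long while.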

A java.sql.Connection object also has some methods for transaction control.

The commit() method performs a COMMIT while the rollback() method performs a ROLLBACK SQL statement.

The setSavepoint(String name) method performs a SAVEPOINT <name> SQL statement and returns a java.sql.Savepoint object. The rollback(Savepoint name) method performs a ROLLBACK TO SAVEPOINT <name> SQL statement.
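The transaction control methods above can be combined as in the following sketch, which uses only standard java.sql.Connection methods. The class `Transactions`, the `SqlWork` interface and both method names are hypothetical. `inTransaction` commits when the work succeeds and rolls back when it fails; `tryStep` uses a savepoint so that a failed optional step undoes only its own changes.

```java
import java.sql.Connection;
import java.sql.SQLException;
import java.sql.Savepoint;

// Hypothetical helper built on the standard JDBC transaction methods.
final class Transactions {

    @FunctionalInterface
    interface SqlWork {
        void run(Connection connection) throws SQLException;
    }

    // Runs the work with auto-commit off: COMMIT on success, ROLLBACK on failure.
    static void inTransaction(Connection connection, SqlWork work) throws SQLException {
        boolean previous = connection.getAutoCommit();
        connection.setAutoCommit(false);
        try {
            work.run(connection);
            connection.commit();
        } catch (SQLException | RuntimeException e) {
            connection.rollback();
            throw e;
        } finally {
            connection.setAutoCommit(previous); // restore the caller's setting
        }
    }

    // Call inside a transaction (auto-commit off). If the step fails, only the
    // changes made since the savepoint are rolled back; returns whether it succeeded.
    static boolean tryStep(Connection connection, String savepointName, SqlWork step)
            throws SQLException {
        Savepoint savepoint = connection.setSavepoint(savepointName);
        try {
            step.run(connection);
            return true;
        } catch (SQLException e) {
            connection.rollback(savepoint);
            return false;
        }
    }
}
```

A caller might write `Transactions.inTransaction(c, conn -> { ... })` and call `tryStep` inside the lambda for parts of the work that are allowed to fail.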

The Javadoc for JDBCConnection, JDBCDriver, JDBCDatabaseMetaData, JDBCResultSet, JDBCStatement and JDBCPreparedStatement lists all the supported JDBC methods together with information that is specific to HSQLDB.

All databases running in different modes can be closed with the SHUTDOWN command, issued as an SQL statement.

When SHUTDOWN is issued, all active transactions are rolled back. The catalog files are then saved in a form that can be opened quickly the next time the catalog is opened.

A special form of closing the database is via the SHUTDOWN COMPACT command. This command rewrites the .data file that contains the information stored in CACHED tables and compacts it to its minimum size. This command should be issued periodically, especially when lots of inserts, updates, or deletes have been performed on the cached tables. Changes to the structure of the database, such as dropping or modifying populated CACHED tables or indexes also create large amounts of unused file space that can be reclaimed using this command.
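Because SHUTDOWN and SHUTDOWN COMPACT are issued as ordinary SQL statements, they can be sent through any open connection with a java.sql.Statement. The sketch below shows one way to do this; the class `ShutdownHelper` is a hypothetical name, and only standard JDBC calls are used.

```java
import java.sql.Connection;
import java.sql.SQLException;
import java.sql.Statement;

// Hypothetical helper: issues the SHUTDOWN command through an open connection.
final class ShutdownHelper {

    // Sends SHUTDOWN, or SHUTDOWN COMPACT when compact is true.
    // The connection should not be used for further work afterwards.
    static void shutdown(Connection connection, boolean compact) throws SQLException {
        try (Statement statement = connection.createStatement()) {
            statement.execute(compact ? "SHUTDOWN COMPACT" : "SHUTDOWN");
        }
    }
}
```

An application would typically call `ShutdownHelper.shutdown(c, false)` once, when it exits, and use the compact form only occasionally, as recommended above.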

Databases are not closed when the last connection to the database is explicitly closed via JDBC. A connection property, shutdown=true, can be specified on the first connection to the database (the connection that opens the database) to force a shutdown when the last connection closes.

Example 1.4. specifying a connection property to shutdown the database when the last connection is closed

Connection c = DriverManager.getConnection(
    "jdbc:hsqldb:file:/opt/db/testdb;shutdown=true", "SA", "");

This feature is useful for running tests, where it may not be practical to shut down the database after each test. But it is not recommended for application programs.

When a server instance is started, or when a connection is made to an in-process database, a new, empty database is created if no database exists at the given path.

With HyperSQL 2.0 the user name and password that are specified for the connection are used for the new database. Both the user name and password are case-sensitive. (The exception is the default SA user, which is not case-sensitive.) If no user name or password is specified, the default SA user and an empty password are used.

This feature has a side effect that can confuse new users. If a mistake is made in specifying the path for connecting to an existing database, a connection is nevertheless established to a new database. For troubleshooting purposes, you can specify a connection property ifexists=true to allow connection to an existing database only and avoid creating a new database. In this case, if the database does not exist, the getConnection() method will throw an exception.

Example 1.5. specifying a connection property to disallow creating a new database

Connection c = DriverManager.getConnection("jdbc:hsqldb:file:/opt/db/testdb;ifexists=true", "SA", "");

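The ifexists=true property can also be used programmatically to test whether a database is present before using it. The following is a minimal sketch, not part of HyperSQL: the class name ExistingDatabaseCheck and the method opensExisting are hypothetical, and the SA user with an empty password is assumed. It relies only on the standard java.sql API.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

// Hypothetical helper, shown for illustration only.
final class ExistingDatabaseCheck {

    /** Returns true only if a connection to an already existing file: database could be opened. */
    static boolean opensExisting(String path) {
        // ifexists=true stops the engine from creating a new, empty database at this path
        String url = "jdbc:hsqldb:file:" + path + ";ifexists=true";
        try (Connection c = DriverManager.getConnection(url, "SA", "")) {
            return true;
        } catch (SQLException e) {
            // Thrown when the database does not exist. It is also thrown when the
            // HyperSQL driver is not on the classpath or the credentials are wrong,
            // so a false result does not identify the cause on its own.
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println(opensExisting("/opt/db/testdb"));
    }
}
```

Note that when the check succeeds, closing the connection does not close the database; a SHUTDOWN statement or the shutdown=true property is still needed for that.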
A database has many optional properties, described in the System Management chapter. You can specify most of these properties on the URL or in the connection properties for the first connection that creates the database. See the Properties chapter.


Copyright 2002-2024 Fred Toussi. Permission is granted to distribute this document without any alteration under the terms of the HSQLDB license. Additional permission is granted to the HSQL Development Group to distribute this document with or without alterations under the terms of the HSQLDB license.

2024-10-25


The SQL language consists of statements for different operations. HyperSQL 2.x supports the dialect of SQL defined progressively by ISO (also ANSI) SQL standards 92, 1999, 2003, 2008, 2011, 2016 and 2023. This means the syntax specified by the Standard text is accepted for any supported operation. Almost all features of SQL-92 up to Advanced Level are supported, as well as the additional features that make up the SQL:2023 core and many optional features of this standard.

At the time of this release, HyperSQL supports the widest range of SQL Standard features among all open source RDBMS.

Various chapters of this guide list the supported syntax. When writing or converting existing SQL DDL (Data Definition Language), DML (Data Manipulation Language) or DQL (Data Query Language) statements for HSQLDB, you should consult the supported syntax and modify the statements accordingly.

Over 300 words are reserved by the Standard and should not be used as table or column names. For example, the word POSITION is reserved as it is a function defined by the Standards with a role similar to String.indexOf(String) in Java. By default, HyperSQL does not prevent you from using a reserved word if it does not support its use or can distinguish it. For example, CUBE is a reserved word for a feature that is supported by HyperSQL from version 2.5.1. Before this version, CUBE was allowed as a table or column name but it is no longer allowed. You should avoid using such names as future versions of HyperSQL are likely to support the reserved words and may reject your table definitions or queries. The full list of SQL reserved words is in the appendix Lists of Keywords. You can set a property to disallow the use of reserved keywords for names of tables and other database objects. There are several other user-defined properties to control the strict application of the SQL Standard in different areas.

If you have to use a reserved keyword as the name of a database object, you can enclose it in double quotes.

HyperSQL also supports enhancements with keywords and expressions that are not part of the SQL standard. Expressions such as SELECT TOP 5 FROM .., SELECT LIMIT 0 10 FROM ... or DROP TABLE mytable IF EXISTS are among such constructs.

Many books cover SQL Standard syntax and can be consulted.

In HyperSQL version 2, all features of JDBC4 that apply to the capabilities of HSQLDB are fully supported. The relevant JDBC classes are thoroughly documented with additional clarifications and HyperSQL specific comments. See the JavaDoc for the org.hsqldb.jdbc.* classes.

The following sections list the keywords that start various SQL statements grouped by their function.

Definition statements create, modify, or remove database objects. Tables and views are objects that contain data. There are other types of objects that do not contain data. These statements are covered in the Schemas and Database Objects chapter.

CREATE

Followed by { SCHEMA | TABLE | VIEW | SEQUENCE | PROCEDURE | FUNCTION | USER | ROLE | ... }, the keyword is used to create the database objects.

ALTER

Followed by the same keywords as CREATE, the keyword is used to modify the object.

DROP

Followed by the same keywords as above, the keyword is used to remove the object. If the object contains data, the data is removed too.

GRANT

Followed by the name of a role or privilege, the keyword assigns a role or gives permissions to a USER or role.

REVOKE

Followed by the name of a role or privilege, REVOKE is the opposite of GRANT.

COMMENT ON

Followed by the same keywords as CREATE, the keyword is used to add a text comment to TABLE, VIEW, COLUMN, ROUTINE, and TRIGGER objects.

EXPLAIN REFERENCES

These keywords are followed by TO or FROM to list the other database objects that reference the given object, or vice versa.

DECLARE

This is used for declaring temporary session tables and variables.

Data manipulation statements add, update, or delete data in tables and views. These statements are covered in the Data Access and Change chapter.

INSERT

Inserts one or more rows into a table or view.

UPDATE

Updates one or more rows in a table or view.

DELETE

Deletes one or more rows from a table or view.

TRUNCATE

Deletes all the rows in a table.

MERGE

Performs a conditional INSERT, UPDATE or DELETE on a table or view using the data given in the statement.

Data query statements retrieve and combine data from tables and views and return result sets. These statements are covered in the Data Access and Change chapter.

SELECT

Returns a result set formed from a subset of rows and columns in one or more tables or views.

VALUES

Returns a result set formed from constant values.

WITH ...

This keyword starts a series of SELECT statements that form a query. The first SELECTs act as subqueries for the final SELECT statement in the same query.

EXPLAIN PLAN

These keywords are followed by the full text of any DQL or DML statement. The result set shows the anatomy of the given DQL or DML statement, including the indexes used to access the tables.

CALL

Calls a procedure or function. Calling a function can return a result set or a value, while calling a procedure can return one or more result sets and values at the same time. This statement is covered in the SQL-Invoked Routines chapter.

SET

The SET statement has many variations and is used for setting the values of the general properties of the database or the current session. Usage of the SET statement for the database is covered in the System Management chapter. Usage for the session is covered in the Sessions and Transactions chapter.

General operations on the database include backup, checkpoint, and other operations. These statements are covered in detail in the System Management chapter.

BACKUP

Creates a backup of the database in a target directory.

PERFORM

Includes commands to export and import SQL scripts from / to the database. Also includes a command to check the consistency of the indexes.

SCRIPT

Creates a script of SQL statements that creates the database objects and settings.

CHECKPOINT

Saves all the changes to the database up to this point to disk files.

SHUTDOWN

Shuts down the database after saving all the changes.

These statements are used in a session to start, end or control transactions. They are covered in the Sessions and Transactions chapter.

START TRANSACTION

This statement initiates a new transaction with the given transaction characteristics.

SET TRANSACTION

Introduces one or more characteristics for the next transaction.

COMMIT

Commits the changes to data made in the current transaction.

ROLLBACK

Rolls back the changes to data made in the current transaction. It is also possible to roll back to a savepoint.

SAVEPOINT

Records a point in the current transaction so that future changes can be rolled back to this point.

RELEASE SAVEPOINT

Releases an existing savepoint.

LOCK

Locks a set of tables for transaction control.

CONNECT

Starts a new session and continues operations in this session.

DISCONNECT

Ends the current session.

Any SQL statement can include comments. The comments are stripped before the statement is executed.

SQL style line comments start with two dashes -- and extend to the end of the line.

C style comments can cover part of the line or multiple lines. They start with /* and end with */.

The body of user-defined SQL procedures and functions (collectively called routines) may contain several other types of statements and keywords in addition to DML and DQL statements. These include: BEGIN and END for blocks; FOR, WHILE and REPEAT loops; IF, ELSE and ELSEIF blocks; SIGNAL and RESIGNAL statements for handling exceptions.

These statements are covered in detail in the SQL-Invoked Routines chapter.

All data is stored in tables. Therefore, creating a database requires defining the tables and their columns. The SQL Standard supports temporary tables, which are for temporary data managed by each session, and permanent base tables, which are for persistent data shared by different sessions.

A HyperSQL database can be an all-in-memory mem: database with no automatic persistence, or a file-based, persistent file: database.

Standard SQL is not case sensitive, except when names of objects are enclosed in double-quotes. SQL keywords can be written in any case; for example, sElect, SELECT and select are all allowed and converted to uppercase. Identifiers, such as names of tables, columns and other objects defined by the user, are also converted to uppercase. For example, myTable, MyTable and MYTABLE all refer to the same table and are stored in the database in the case-normal form, which is all uppercase for unquoted identifiers. When the name of an object is enclosed in double quotes when it is created, the exact name is used as the case-normal form and it must be referenced with the exact same double-quoted string. For example, "myTable" and "MYTABLE" are different tables. When the double-quoted name is all-uppercase, it can be referenced in any case; "MYTABLE" is the same as myTable and MyTable because they are all converted to MYTABLE.
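The case-normal rule described above can be modelled in a few lines of Java. This is a simplified sketch rather than the engine's implementation: the class IdentifierCase and the method caseNormal are hypothetical names, and escaped double quotes inside quoted identifiers are not handled.

```java
import java.util.Locale;

// Hypothetical model of the case-normal form of SQL identifiers.
final class IdentifierCase {

    /** Returns the case-normal form of an identifier exactly as it is written in SQL text. */
    static String caseNormal(String written) {
        boolean quoted = written.length() >= 2
                && written.startsWith("\"")
                && written.endsWith("\"");
        if (quoted) {
            // Double-quoted: the exact characters between the quotes are the name
            return written.substring(1, written.length() - 1);
        }
        // Unquoted: converted to uppercase
        return written.toUpperCase(Locale.ENGLISH);
    }

    public static void main(String[] args) {
        System.out.println(caseNormal("myTable"));      // MYTABLE
        System.out.println(caseNormal("\"myTable\""));  // myTable
        System.out.println(caseNormal("\"MYTABLE\""));  // MYTABLE
    }
}
```

Two identifiers refer to the same object when their case-normal forms are equal, which is why "MYTABLE" matches myTable while "myTable" does not.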

HyperSQL supports the Standard definition of persistent base table, but defines three types according to the way the data is stored. These are MEMORY tables, CACHED tables, and TEXT tables.

MEMORY tables are the default type when the CREATE TABLE command is used. Their data is held entirely in memory. In file-based databases, MEMORY tables are persistent and any change to their structure or contents is written to the *.log and *.script files. The *.script file and the *.log file are read the next time the database is opened, and the MEMORY tables are recreated with all the data. This process may take a long time if the database is larger than tens of megabytes. When the database is shut down, all the data is saved.

CACHED tables are created with the CREATE CACHED TABLE command. Only part of their data or indexes is held in memory, allowing large tables that would otherwise take up to several hundred megabytes of memory. Another advantage of cached tables is that the database engine takes less time to start up when a cached table is used for large amounts of data. The disadvantage of cached tables is a reduction in speed. Do not use cached tables if your data set is relatively small. In an application with some small tables and some large ones, it is better to use the default, MEMORY mode for the small tables.

TEXT tables use a CSV (Comma Separated Value) or other delimited text file as the source of their data. You can specify an existing CSV file, such as a dump from another database or program, as the source of a TEXT table. Alternatively, you can specify an empty file to be filled with data by the database engine. TEXT tables are efficient in memory usage as they cache only part of the text data and all of the indexes. The TEXT table data source can always be reassigned to a different file if necessary. The commands needed to set up a TEXT table are detailed in the Text Tables chapter.

With all-in-memory mem: databases, both MEMORY table and CACHED table declarations are treated as declarations for MEMORY tables which last only for the duration of the Java process. In the latest versions of HyperSQL, TEXT table declarations are allowed in all-in-memory databases.

The default type of tables resulting from future CREATE TABLE statements can be specified with the SQL command:

SET DATABASE DEFAULT TABLE TYPE { CACHED | MEMORY };

The type of an existing table can be changed with the SQL command:

SET TABLE <table name> TYPE { CACHED | MEMORY };

SQL statements such as INSERT or SELECT access different types of tables uniformly. No change to statements is needed to access different types of table.

Data in TEMPORARY tables is not saved and lasts only for the lifetime of the session. The contents of each TEMP table are visible only from the session that is used to populate it.

HyperSQL supports two types of temporary tables.

The GLOBAL TEMPORARY type is a schema object. It is created with the CREATE GLOBAL TEMPORARY TABLE statement. The definition of the table persists, and each session has access to the table. But each session sees its own copy of the table, which is empty at the beginning of the session.

The LOCAL TEMPORARY type is not a schema object. It is created with the DECLARE LOCAL TEMPORARY TABLE statement. The table definition lasts only for the duration of the session and is not persisted in the database. The table can be declared in the middle of a transaction without committing the transaction. If a schema name is needed to reference these tables in a given SQL statement, the pseudo schema name SESSION can be used.

When the session commits, the contents of all temporary tables are cleared by default. If the table definition statement includes ON COMMIT PRESERVE ROWS, then the contents are kept when a commit takes place.

The rows in temporary tables are stored in memory by default. If the hsqldb.result_max_memory_rows property has been set or the SET SESSION RESULT MEMORY ROWS <row count> statement has been executed, tables with a row count above the setting are stored on disk.

The SQL Standard is strongly typed and completely type-safe. It supports the following basic types, which are all supported by HyperSQL.

-

Numeric types TINYINT, SMALLINT, INTEGER and BIGINT are types with fixed binary precision. These types are more efficient to store and retrieve. NUMERIC and DECIMAL are types with user-defined decimal precision. They can be used with zero scale to store very large integers, or with a non-zero scale to store decimal fractions. The DOUBLE type is a 64-bit, approximate floating point type. HyperSQL even allows you to store infinity in this type.

-

The BOOLEAN type is for logical values and can hold TRUE, FALSE or UNKNOWN. Although HyperSQL allows you to use one and zero in assignment or comparison, you should use the standard values for this type.

-

Character string types are CHAR(L), VARCHAR(L) and CLOB (here, L stands for length parameter, an integer). CHAR is for fixed width strings and any string that is assigned to this type is padded with spaces at the end. If you use CHAR without the length L, then it is interpreted as a single character string. Do not use this type for general storage of strings. Use VARCHAR(L) for general strings. There are only memory limits and performance implications for the maximum length of VARCHAR(L). If the strings are larger than a few kilobytes, consider using CLOB. The CLOB type is a better choice for very long strings. Do not use this type for short strings as there are performance implications. By default, LONGVARCHAR is a synonym for a long VARCHAR and can be used without specifying the size. You can set LONGVARCHAR to map to CLOB, with the sql.longvar_is_lob connection property or the SET DATABASE SQL LONGVAR IS LOB TRUE statement.

-

Binary string types are BINARY(L), VARBINARY(L) and BLOB. Do not use BINARY(L) unless you are storing fixed length binary strings. This type pads short binary strings with zero bytes. BINARY without the length L means a single byte. Use VARBINARY(L) for general binary strings, and BLOB for large binary objects. You should apply the same considerations as with the character string types. By default, LONGVARBINARY is a synonym for a long VARBINARY and can be used without specifying the size. You can set LONGVARBINARY to map to BLOB, with the sql.longvar_is_lob connection property or the SET DATABASE SQL LONGVAR IS LOB TRUE statement.

-

The BIT(L) and BIT VARYING(L) types are for bit maps. Do not use them for other types of data. BIT without the length L argument means a single bit and is sometimes used as a logical type. Use BOOLEAN instead of this type.

-

The UUID type is for UUID (also called GUID) values. The value is stored as BINARY. UUID character strings, as well as BINARY strings, can be used to insert or to compare.

-

The datetime types DATE, TIME, and TIMESTAMP, together with their WITH TIME ZONE variations are available. Read the details in this chapter on how to use these types.

-

The INTERVAL type is very powerful when used together with the datetime types. This is very easy to use, but is supported mainly by enterprise database systems. Note that functions that add days or months to datetime values are not really a substitute for the INTERVAL type. Expressions such as (datecol - 7 DAY) > CURRENT_DATE are optimised to use indexes when it is possible, while the equivalent function calls are not optimised.

-

The OTHER type is for storage of Java objects. If your objects are large, serialize them in your application and store them as BLOB in the database.

-

The ARRAY type supports all base types except LOB and OTHER types. ARRAY data objects are held in memory while being processed. It is therefore not recommended to store more than about a thousand objects in an ARRAY in normal operations with disk-based databases. For specialised applications, use ARRAY with as many elements as your memory allocation can support.

HyperSQL 2.7 has several compatibility modes which allow the type names that are used by other RDBMS to be accepted and translated into the closest SQL Standard type. For example, the type TEXT, supported by MySQL and PostgreSQL is translated in these compatibility modes.

Table 2.1. List of SQL types

| Type | Description |
|---|---|
| TINYINT, SMALLINT, INT or INTEGER, BIGINT | binary number types with 8, 16, 32, 64 bit precision respectively |
| DOUBLE or FLOAT | 64 bit precision floating point number |
| DECIMAL(P,S), DEC(P,S) or NUMERIC(P,S) | identical types for fixed precision number (*) |
| BOOLEAN | boolean type supports TRUE, FALSE and UNKNOWN |
| CHAR(L) or CHARACTER(L) | fixed-length UTF-16 string type - padded with space to length L (**) |
| VARCHAR(L) or CHARACTER VARYING(L) | variable-length UTF-16 string type (***) |
| CLOB(L) | variable-length UTF-16 long string type (***) |
| LONGVARCHAR(L) | a non-standard synonym for VARCHAR(L) (***) |
| BINARY(L) | fixed-length binary string type - padded with zero to length L (**) |
| VARBINARY(L) or BINARY VARYING(L) | variable-length binary string type (***) |
| BLOB(L) | variable-length binary string type (***) |
| LONGVARBINARY(L) | a non-standard synonym for VARBINARY(L) (***) |
| BIT(L) | fixed-length bit map - padded with 0 to length L - maximum value of L is 1024 |
| BIT VARYING(L) | variable-length bit map - maximum value of L is 1024 |
| UUID | 16 byte fixed binary type represented as UUID string |
| DATE | date |
| TIME(S) | time of day (****) |
| TIME(S) WITH TIME ZONE | time of day with zone displacement value (****) |
| TIMESTAMP(S) | date with time of day (****) |
| TIMESTAMP(S) WITH TIME ZONE | timestamp with zone displacement value (****) |
| INTERVAL | date or time interval - has many variants |
| OTHER | non-standard type for Java serializable object |
| ARRAY | array of a base type |

In the table above:

(*) The parameters are optional. P is used for maximum precision and S for scale of DECIMAL and NUMERIC. If only P is used, S defaults to 0. If none is used, P defaults to 128 and S defaults to 0. The maximum value of each parameter is unlimited.

(**) The parameter L is used for fixed length. If not used, it defaults to 1.

(***) The parameter L is used for maximum length. It is optional. If not used, it defaults to 32K for VARCHAR and VARBINARY, 1G for BLOB or CLOB, and 16M for LONGVARCHAR and LONGVARBINARY. The maximum value of the parameter is unlimited for CLOB and BLOB. It is 2 * 1024 * 1024 * 1024 for other string types.

(****) The parameter S is optional and indicates sub-second fraction precision of time (0 to 9). When not used, it defaults to 6 for TIMESTAMP and 0 for TIME.

HyperSQL supports all the types defined by SQL-92, plus BOOLEAN, BINARY, ARRAY and LOB types that were later added to the SQL Standard. It also supports the non-standard OTHER type to store serializable Java objects.

SQL is a strongly typed language. All data stored in specific columns of tables and other objects (such as sequence generators) have specific types. Each data item conforms to the type limits such as precision and scale for the column. It also conforms to any additional integrity constraints that are defined as CHECK constraints in domains or tables. Types can be explicitly converted using the CAST expression, but in most expressions, they are converted automatically.

Data is returned to the user (or the application program) as a result of executing SQL statements such as query expressions or function calls. All statements are compiled prior to execution and the return type of the data is known after compilation and before execution. Therefore, once a statement is prepared, the data type of each column of the returned result is known, including any precision or scale property. The type does not change when the same query that returned one row, returns many rows as a result of adding more data to the tables.

Some SQL functions used within SQL statements are polymorphic, but the exact type of the argument and the return value is determined at compile time.

When a statement is prepared, using a JDBC PreparedStatement object, it is compiled by the engine and the type of the columns of its ResultSet and / or its parameters are accessible through the methods of PreparedStatement.

TINYINT, SMALLINT, INTEGER, BIGINT, NUMERIC and DECIMAL (without a decimal point) are the supported integral types. They correspond respectively to byte, short, int, long, BigDecimal and BigDecimal Java types in the range of values that they can represent (NUMERIC and DECIMAL are equivalent). The type TINYINT is an HSQLDB extension to the SQL Standard, while the others conform to the Standard definition. The SQL type dictates the maximum and minimum values that can be held in a field of each type. For example, the value range for TINYINT is -128 to +127. The bit precision of TINYINT, SMALLINT, INTEGER and BIGINT is respectively 8, 16, 32 and 64. For NUMERIC and DECIMAL, decimal precision is used.

DECIMAL and NUMERIC with decimal fractions are mapped to java.math.BigDecimal and can have very large numbers of digits. In HyperSQL the two types are equivalent. These types, together with integral types, are called exact numeric types.

In HyperSQL, REAL, FLOAT and DOUBLE are equivalent: they are all mapped to double in Java. These types are defined by the SQL Standard as approximate numeric types. The bit-precision of all these types is 64 bits.

The decimal precision and scale of NUMERIC and DECIMAL types can be optionally defined. For example, DECIMAL(10,2) means maximum total number of digits is 10 and there are always 2 digits after the decimal point, while DECIMAL(10) means 10 digits without a decimal point. The bit-precision of FLOAT can be defined but it is ignored and the default bit-precision of 64 is used. The default precision of NUMERIC and DECIMAL (when not defined) is 128.

Note: If a database has been set to ignore type precision limits with the SET DATABASE SQL SIZE FALSE command, then a type definition of DECIMAL with no precision and scale is treated as DECIMAL(128,32). In normal operation, it is treated as DECIMAL(128).

Integral Types

In expressions, values of TINYINT, SMALLINT, INTEGER, BIGINT, NUMERIC and DECIMAL (without a decimal point) types can be freely combined and no data narrowing takes place. The resulting value is of a type that can support all possible values.

If the SELECT statement refers to a simple column or function, then the return type is the type corresponding to the column or the return type of the function. For example:

CREATE TABLE t(a INTEGER, b BIGINT); SELECT MAX(a), MAX(b) FROM t;

will return a ResultSet where the type of the first column is java.lang.Integer and the second column is java.lang.Long. However,

SELECT MAX(a) + 1, MAX(b) + 1 FROM t;

will return java.lang.Long and BigDecimal values, generated as a result of uniform type promotion for all possible return values. Note that type promotion to BigDecimal ensures the correct value is returned if MAX(b) evaluates to Long.MAX_VALUE.

There is no built-in limit on the size of intermediate integral values in expressions. As a result, you should check for the type of the ResultSet column and choose an appropriate getXXXX() method to retrieve it. Alternatively, you can use the getObject() method, then cast the result to java.lang.Number and use the intValue() or longValue() method if the value is not an instance of java.math.BigDecimal.
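The retrieval advice above can be sketched in plain Java. The class IntegralResults and the method toLongExact are hypothetical names used for illustration; the value argument stands for whatever ResultSet.getObject() returned for an integral column. The main method also shows why promotion to BigDecimal matters when one is added to Long.MAX_VALUE.

```java
import java.math.BigDecimal;

// Hypothetical helper for values obtained from ResultSet.getObject().
final class IntegralResults {

    /** Converts an integral result to long, rejecting values that do not fit. */
    static long toLongExact(Object value) {
        if (value instanceof BigDecimal) {
            // Throws ArithmeticException if the value is outside the long range
            // or has a non-zero fractional part
            return ((BigDecimal) value).longValueExact();
        }
        // Integer and Long results are widened without loss
        return ((Number) value).longValue();
    }

    public static void main(String[] args) {
        // Java long arithmetic silently wraps around on overflow
        System.out.println(Long.MAX_VALUE + 1);   // -9223372036854775808
        // BigDecimal arithmetic keeps the mathematically correct value
        BigDecimal promoted = BigDecimal.valueOf(Long.MAX_VALUE).add(BigDecimal.ONE);
        System.out.println(promoted);             // 9223372036854775808
    }
}
```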

When the result of an expression is stored in a column of a database table, it has to fit in the target column, otherwise an error is returned. For example, when 1234567890123456789012 / 12345687901234567890 is evaluated, the result can be stored in any integral type column, even a TINYINT column, as it is a small value.

In SQL statements, an integer literal is treated as INTEGER, unless its value does not fit. In this case it is treated as BIGINT or DECIMAL, depending on the value.
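As a rough illustration of this rule, the sketch below classifies an unsigned integer literal by the smallest of the three types that can hold it. The class IntegerLiteralType and the method typeOf are hypothetical, and the thresholds are an assumption based on the 32-bit and 64-bit precisions of INTEGER and BIGINT stated in this chapter, not a description of the engine's parser.

```java
import java.math.BigInteger;

// Hypothetical classifier for unsigned integer literals.
final class IntegerLiteralType {

    /** Returns the name of the smallest type assumed to hold the literal. */
    static String typeOf(String literal) {
        BigInteger value = new BigInteger(literal);
        // bitLength() excludes the sign bit, so 31 bits fit a 32-bit signed type
        if (value.bitLength() <= 31) {
            return "INTEGER";
        }
        if (value.bitLength() <= 63) {
            return "BIGINT";
        }
        return "DECIMAL";
    }

    public static void main(String[] args) {
        System.out.println(typeOf("2147483647"));           // INTEGER
        System.out.println(typeOf("2147483648"));           // BIGINT
        System.out.println(typeOf("9223372036854775808"));  // DECIMAL
    }
}
```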

Depending on the types of the operands, the result of the operation is returned in a JDBC ResultSet in any of the related Java types: Integer, Long or BigDecimal. The ResultSet.getXXXX() methods can be used to retrieve the values so long as the returned value can be represented by the resulting type. This type is deterministically based on the query, not on the actual rows returned.

Other Numeric Types

In SQL statements, number literals with a decimal point are treated as DECIMAL unless they are written with an exponent. Thus 0.2 is considered a DECIMAL value but 0.2E0 is considered a DOUBLE value.

When an approximate numeric type, REAL, FLOAT or DOUBLE (all synonymous) is part of an expression involving different numeric types, the type of the result is DOUBLE. DECIMAL values can be converted to DOUBLE unless they are beyond the Double.MIN_VALUE - Double.MAX_VALUE range. For example, A * B, A / B, A + B, etc., will return a DOUBLE value if either A or B is a DOUBLE.

Otherwise, when no DOUBLE value exists, if a DECIMAL or NUMERIC value is part of an expression, the type of the result is DECIMAL or NUMERIC. Similar to integral values, when the result of an expression is assigned to a table column, the value has to fit in the target column, otherwise an error is returned. This means a small, 4 digit value of DECIMAL type can be assigned to a column of SMALLINT or INTEGER, but a value with 15 digits cannot.

When a DECIMAL value is multiplied by a DECIMAL or integral type, the resulting scale is the sum of the scales of the two terms. When they are divided, the result is a value with a scale (number of digits to the right of the decimal point) equal to the larger of the scales of the two terms. The precision for both operations is calculated (usually increased) to allow all possible results.

The distinction between DOUBLE and DECIMAL is important when a division takes place. For example, 10.0/8.0 (DECIMAL) equals 1.2 but 10.0E0/8.0E0 (DOUBLE) equals 1.25. Without division operations, DECIMAL values represent exact arithmetic.
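The scale rules for DECIMAL multiplication and division can be reproduced with java.math.BigDecimal. This is a sketch under stated assumptions: the class DecimalVersusDouble and its methods are hypothetical, and RoundingMode.DOWN (truncation) is chosen only because it reproduces the 1.2 result quoted above; the engine's actual rounding behaviour is not specified here.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

// Hypothetical model of DECIMAL scale rules.
final class DecimalVersusDouble {

    /** Division: the result scale is the larger of the two operand scales. */
    static BigDecimal decimalDivide(BigDecimal a, BigDecimal b) {
        int scale = Math.max(a.scale(), b.scale());
        // Truncation is an assumption that matches the 10.0/8.0 example
        return a.divide(b, scale, RoundingMode.DOWN);
    }

    /** Multiplication: BigDecimal already uses the sum of the operand scales. */
    static BigDecimal decimalMultiply(BigDecimal a, BigDecimal b) {
        return a.multiply(b);
    }

    public static void main(String[] args) {
        BigDecimal ten = new BigDecimal("10.0");
        BigDecimal eight = new BigDecimal("8.0");
        System.out.println(decimalDivide(ten, eight));  // 1.2
        System.out.println(10.0 / 8.0);                 // 1.25
        System.out.println(decimalMultiply(new BigDecimal("1.5"), new BigDecimal("2.25"))); // 3.375
    }
}
```

When more fractional digits are needed from a DECIMAL division, one option is to give an operand a larger scale, for example by writing 10.000 instead of 10.0.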

REAL, FLOAT and DOUBLE values are all stored in the database as java.lang.Double objects. Special values such as NaN and +-Infinity are also stored and supported. These values can be submitted to the database via JDBC PreparedStatement methods and are returned in ResultSet objects. In order to allow division by zero of DOUBLE values in SQL statements (which returns NaN or +-Infinity) you should set the property hsqldb.double_nan as false (SET DATABASE SQL DOUBLE NAN FALSE). The double values can be retrieved from a ResultSet in the required type so long as they can be represented. For setting the values, when PreparedStatement.setDouble() or setFloat() is used, the value is treated as a DOUBLE automatically.
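The special values mentioned above are ordinary Java double values. The sketch below uses only the JDK, with a hypothetical class DoubleSpecialValues and method describe, to show how they arise from division by zero in Java arithmetic and how an application can tell them apart after retrieval. It illustrates Java semantics only, not the engine's SQL evaluation.

```java
// Hypothetical helper that names the special double values.
final class DoubleSpecialValues {

    /** Classifies a double as NaN, +Infinity, -Infinity or finite. */
    static String describe(double value) {
        if (Double.isNaN(value)) {
            return "NaN";
        }
        if (Double.isInfinite(value)) {
            return value > 0 ? "+Infinity" : "-Infinity";
        }
        return "finite";
    }

    public static void main(String[] args) {
        System.out.println(describe(1.0 / 0.0));   // +Infinity
        System.out.println(describe(-1.0 / 0.0));  // -Infinity
        System.out.println(describe(0.0 / 0.0));   // NaN
        System.out.println(describe(1.25));        // finite
    }
}
```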

In short,

<numeric type> ::= <exact numeric type> | <approximate numeric type>

<exact numeric type> ::= NUMERIC [ <left paren> <precision> [ <comma> <scale> ] <right paren> ] | { DECIMAL | DEC } [ <left paren> <precision> [ <comma> <scale> ] <right paren> ] | TINYINT | SMALLINT | INTEGER | INT | BIGINT

<approximate numeric type> ::= FLOAT [ <left paren> <precision> <right paren> ] | REAL | DOUBLE PRECISION

<precision> ::= <unsigned integer>

<scale> ::= <unsigned integer>

The BOOLEAN type conforms to the SQL Standard and represents the values TRUE, FALSE and UNKNOWN. This type of column can be initialised with Java boolean values, or with NULL for the UNKNOWN value.

The three-value logic is sometimes misunderstood. For example, x IN (1, 2, NULL) does not return true if x is NULL.
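The IN example can be traced with a small model of three-valued logic, in which a Java null stands for UNKNOWN. The class ThreeValuedIn and the method in are hypothetical; the sketch treats x IN (a, b, c) as x = a OR x = b OR x = c, which is how the SQL Standard defines the predicate.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical model of the SQL IN predicate with NULL handling.
final class ThreeValuedIn {

    /** Returns Boolean.TRUE, Boolean.FALSE, or null for UNKNOWN. */
    static Boolean in(Integer x, List<Integer> list) {
        boolean sawUnknown = false;
        for (Integer item : list) {
            if (x == null || item == null) {
                // A comparison with NULL is UNKNOWN
                sawUnknown = true;
            } else if (x.equals(item)) {
                // TRUE OR anything is TRUE
                return Boolean.TRUE;
            }
        }
        // FALSE OR UNKNOWN is UNKNOWN; only all-FALSE comparisons give FALSE
        return sawUnknown ? null : Boolean.FALSE;
    }

    public static void main(String[] args) {
        List<Integer> values = Arrays.asList(1, 2, null);
        System.out.println(in(null, values));  // null (UNKNOWN)
        System.out.println(in(1, values));     // true
        System.out.println(in(3, values));     // null (UNKNOWN)
    }
}
```

Because a WHERE clause keeps only rows for which the condition is TRUE, a row with a NULL x is not selected by x IN (1, 2, NULL).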

In previous versions of HyperSQL, BIT was simply an alias for BOOLEAN. In version 2, BIT is a single-bit bit map.

<boolean type> ::= BOOLEAN

The SQL Standard does not support type conversion to BOOLEAN apart from character strings that consist of boolean literals. Because the BOOLEAN type is relatively new to the Standard, several database products used other types to represent boolean values. For improved compatibility, HyperSQL allows some type conversions to boolean.

Values of BIT and BIT VARYING types with length 1 can be converted to BOOLEAN. If the bit is set, the result of conversion is the TRUE value, otherwise it is FALSE.

Values of TINYINT, SMALLINT, INTEGER and BIGINT types can be converted to BOOLEAN. If the value is zero, the result is the FALSE value, otherwise it is TRUE.

The CHARACTER, CHARACTER VARYING and CLOB types are the SQL Standard character string types. CHAR, VARCHAR and CHARACTER LARGE OBJECT are synonyms for these types. HyperSQL also supports LONGVARCHAR as a synonym for VARCHAR. If LONGVARCHAR is used without a length, then a length of 16M is assigned. You can set LONGVARCHAR to map to CLOB, with the sql.longvar_is_lob connection property or the SET DATABASE SQL LONGVAR IS LOB TRUE statement.

HyperSQL's default character set is Unicode, therefore all possible character strings can be represented by these types.

The SQL Standard behaviour of the CHARACTER type is a remnant of legacy systems in which character strings are padded with spaces to fill a fixed width. These spaces are sometimes significant while in other cases they are silently discarded. It would be best to avoid the CHARACTER type altogether. With the rest of the types, the strings are not padded when assigned to columns or variables of the given type. The trailing spaces are still considered discardable for all character types. Therefore, if a string with trailing spaces is too long to assign to a column or variable of a given length, the spaces beyond the type length are discarded and the assignment succeeds (provided all the characters beyond the type length are spaces).
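The assignment rule for over-long strings can be modelled in a few lines. This is a sketch of the rule as stated above, not HyperSQL's implementation: the method name is hypothetical, lengths are counted in Java `char` units for simplicity, and the error is modelled as an `IllegalArgumentException`.

```java
class TrailingSpaceSketch {
    // Models assigning a string to a VARCHAR(maxLength) column or variable.
    // Characters beyond maxLength are dropped only if every one of them is a space.
    static String assignToVarchar(String value, int maxLength) {
        if (value.length() <= maxLength) {
            return value; // shorter VARCHAR values are stored as they are, without padding
        }
        for (int i = maxLength; i < value.length(); i++) {
            if (value.charAt(i) != ' ') {
                throw new IllegalArgumentException(
                    "string too long for VARCHAR(" + maxLength + ")");
            }
        }
        return value.substring(0, maxLength);
    }

    public static void main(String[] args) {
        System.out.println("[" + assignToVarchar("abc   ", 4) + "]"); // [abc ]
        System.out.println("[" + assignToVarchar("abc", 4) + "]");    // [abc]
        try {
            assignToVarchar("abcdef", 4);
        } catch (IllegalArgumentException e) {
            System.out.println("rejected: " + e.getMessage());
        }
    }
}
```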

The VARCHAR and CLOB types have length limits, but the strings are not padded by the system. Note that if you use a large length for a VARCHAR or CLOB type, no extra space is used in the database. The space used for each stored item is proportional to its actual length.

If CHARACTER is used without specifying the length, the length defaults to 1. For the CLOB type, the length limit can be defined in units of kilobyte (K, 1024), megabyte (M, 1024 * 1024) or gigabyte (G, 1024 * 1024 * 1024), using the <multiplier>. If CLOB is used without specifying the length, the length defaults to 1GB.
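As a worked example of the multipliers, the following sketch converts a length written with K, M or G into a plain number. It is an illustrative helper with a hypothetical name, not part of HyperSQL; it only applies the factors given above.

```java
import java.util.Locale;

class LobLengthSketch {
    // Converts a length such as "1000", "30K", "1M" or "1G" to a plain number,
    // using K = 1024, M = 1024 * 1024 and G = 1024 * 1024 * 1024.
    static long parseLobLength(String token) {
        String t = token.trim().toUpperCase(Locale.ROOT);
        if (t.isEmpty()) {
            throw new IllegalArgumentException("empty length");
        }
        long factor = 1L;
        switch (t.charAt(t.length() - 1)) {
            case 'K': factor = 1024L; break;
            case 'M': factor = 1024L * 1024; break;
            case 'G': factor = 1024L * 1024 * 1024; break;
            default: break; // no multiplier present
        }
        String digits = factor == 1L ? t : t.substring(0, t.length() - 1);
        return Long.parseLong(digits) * factor;
    }

    public static void main(String[] args) {
        System.out.println(parseLobLength("30K")); // 30720
        System.out.println(parseLobLength("1M"));  // 1048576
        System.out.println(parseLobLength("1G"));  // 1073741824
    }
}
```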

<character string type> ::= { CHARACTER | CHAR } [ <left paren> <character length> <right paren> ] | { CHARACTER VARYING | CHAR VARYING | VARCHAR } <left paren> <character length> <right paren> | LONGVARCHAR [ <left paren> <character length> <right paren> ] | <character large object type>

<character large object type> ::= { CHARACTER LARGE OBJECT | CHAR LARGE OBJECT | CLOB } [ <left paren> <character large object length> <right paren> ]

<character length> ::= <unsigned integer> [ <char length units> ]

<large object length> ::= <length> [ <multiplier> ] | <large object length token>

<character large object length> ::= <large object length> [ <char length units> ]

<large object length token> ::= <digit>... <multiplier>

<multiplier> ::= K | M | G

<char length units> ::= CHARACTERS | OCTETS

Each character type has a collation. This is either a default collation or stated explicitly with the COLLATE clause. Collations are discussed in the Schemas and Database Objects chapter.

CHAR(10)
CHARACTER(10)
VARCHAR(2)
CHAR VARYING(2)
CLOB(1000)
CLOB(30K)
CHARACTER LARGE OBJECT(1M)
LONGVARCHAR

The BINARY, BINARY VARYING and BLOB types are the SQL Standard binary string types. VARBINARY and BINARY LARGE OBJECT are synonyms for BINARY VARYING and BLOB types. HyperSQL also supports LONGVARBINARY as a synonym for VARBINARY. You can set LONGVARBINARY to map to BLOB, with the sql.longvar_is_lob connection property or the SET DATABASE SQL LONGVAR IS LOB TRUE statement.

Binary string types are used in a similar way to character string types. There are several built-in functions that are overloaded to support character, binary and bit strings.

The BINARY type represents a fixed-width string. Each shorter string is padded with zeros to fill the fixed width. Similar to the CHARACTER type, the trailing zeros in the BINARY string are simply discarded in some operations. For the same reason, it is best to avoid this particular type and use VARBINARY instead.

When two binary values are compared, if one is of BINARY type, then zero padding is performed to extend the length of the shorter string to the longer one before comparison. No padding is performed with other binary types. If the bytes compare equal to the end of the shorter value, then the longer string is considered larger than the shorter string.
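The comparison rules can be modelled as follows. This is a sketch of the behaviour described in the paragraph above, not HyperSQL's source code. The flag `oneIsBinaryType` is a hypothetical parameter standing for "one operand is of BINARY type", and comparing bytes as unsigned values is an assumption made for the illustration.

```java
class BinaryCompareSketch {
    // Returns -1, 0 or 1 as a compares less than, equal to or greater than b.
    static int compare(byte[] a, byte[] b, boolean oneIsBinaryType) {
        int shorter = Math.min(a.length, b.length);
        for (int i = 0; i < shorter; i++) {
            int x = a[i] & 0xff; // assumption: bytes compare as unsigned values
            int y = b[i] & 0xff;
            if (x != y) {
                return x < y ? -1 : 1;
            }
        }
        if (a.length == b.length) {
            return 0;
        }
        byte[] longer = a.length > b.length ? a : b;
        int longerWins = a.length > b.length ? 1 : -1;
        if (oneIsBinaryType) {
            // The shorter value is zero padded, so the longer value is larger
            // only if its extra bytes contain something other than zero.
            for (int i = shorter; i < longer.length; i++) {
                if (longer[i] != 0) {
                    return longerWins;
                }
            }
            return 0;
        }
        // No padding: equal up to the end of the shorter value, so the longer is larger.
        return longerWins;
    }

    public static void main(String[] args) {
        byte[] two = {1, 2};
        byte[] three = {1, 2, 0};
        System.out.println(compare(two, three, true));  // 0
        System.out.println(compare(two, three, false)); // -1
    }
}
```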

If BINARY is used without specifying the length, the length defaults to 1. For the BLOB type, the length limit can be defined in units of kilobyte (K, 1024), megabyte (M, 1024 * 1024) or gigabyte (G, 1024 * 1024 * 1024), using the <multiplier>. If BLOB is used without specifying the length, the length defaults to 1GB.

The UUID type represents a UUID string. The type is similar to BINARY(16) but with the extra enforcement that disallows assigning, casting, or comparing with shorter or longer strings. Strings such as '24ff1824-01e8-4dac-8eb3-3fee32ad2b9c' or '24ff182401e84dac8eb33fee32ad2b9c' are allowed. When a value of the UUID type is converted to a CHARACTER type, the hyphens are inserted in the required positions. Java UUID objects can be used with java.sql.PreparedStatement to insert values of this type. The getObject() method of ResultSet returns the Java object for UUID column data.
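The two accepted string forms and the 16-byte view of a UUID can be illustrated with `java.util.UUID` alone. The sketch below is a standalone model with hypothetical helper names. The byte layout shown (most significant bits first) is the conventional one and is an assumption as far as HyperSQL's internal storage is concerned.

```java
import java.nio.ByteBuffer;
import java.util.UUID;

class UuidSketch {
    // Accepts the hyphenated form and the plain 32-hex-digit form.
    static UUID parse(String s) {
        if (s.length() == 32) {
            // insert the hyphens in the standard 8-4-4-4-12 positions
            s = s.substring(0, 8) + "-" + s.substring(8, 12) + "-"
                + s.substring(12, 16) + "-" + s.substring(16, 20) + "-"
                + s.substring(20);
        }
        return UUID.fromString(s);
    }

    // The 16-byte binary view of the UUID, most significant bits first.
    static byte[] toBytes(UUID uuid) {
        return ByteBuffer.allocate(16)
            .putLong(uuid.getMostSignificantBits())
            .putLong(uuid.getLeastSignificantBits())
            .array();
    }

    public static void main(String[] args) {
        UUID a = parse("24ff1824-01e8-4dac-8eb3-3fee32ad2b9c");
        UUID b = parse("24ff182401e84dac8eb33fee32ad2b9c");
        System.out.println(a.equals(b));       // true
        System.out.println(b);                 // 24ff1824-01e8-4dac-8eb3-3fee32ad2b9c
        System.out.println(toBytes(a).length); // 16
    }
}
```

With JDBC, a `UUID` object like `a` would be passed to `PreparedStatement.setObject()` and read back with `ResultSet.getObject()`, as described above.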

<binary string type> ::= BINARY [ <left paren> <length> <right paren> ] | { BINARY VARYING | VARBINARY } <left paren> <length> <right paren> | LONGVARBINARY [ <left paren> <length> <right paren> ] | UUID | <binary large object string type>

<binary large object string type> ::= { BINARY LARGE OBJECT | BLOB } [ <left paren> <large object length> <right paren> ]

<length> ::= <unsigned integer>

BINARY(10)
VARBINARY(2)
BINARY VARYING(2)
BLOB(1000)
BLOB(30G)
BINARY LARGE OBJECT(1M)
LONGVARBINARY

The BIT and BIT VARYING types are the supported bit string types. These types were defined by SQL:1999 but were later removed from the Standard. Bit types represent bit maps of given lengths. Each bit is 0 or 1. The BIT type represents a fixed-width string. Each shorter string is padded with zeros to fill the fixed width. If BIT is used without specifying the length, the length defaults to 1. The BIT VARYING type has a maximum width and shorter strings are not padded.

Before the introduction of the BOOLEAN type to the SQL Standard, a single-bit string of the type BIT(1) was commonly used. For compatibility with other products that do not conform to, or extend, the SQL Standard, HyperSQL allows values of BIT and BIT VARYING types with length 1 to be converted to and from the BOOLEAN type. BOOLEAN TRUE is considered equal to B'1', BOOLEAN FALSE is considered equal to B'0'.

For the same reason, numeric values can be assigned to columns and variables of the type BIT(1). For assignment, the numeric value zero is converted to B'0', while all other values are converted to B'1'. For comparison, the numeric value 1 is considered equal to B'1' and the numeric value zero is considered equal to B'0'.

Other arithmetic or boolean operations involving BIT(1) and BIT VARYING(1) are not allowed. The kinds of operations allowed on bit strings are analogous to those allowed on BINARY and CHARACTER strings. Several built-in functions support all three types of string.

<bit string type> ::= BIT [ <left paren> <length> <right paren> ] | BIT VARYING <left paren> <length> <right paren>

BIT
BIT(10)
BIT VARYING(2)

BLOB and CLOB are lob types. These types are used for very long strings that do not necessarily fit in memory. Small lobs that fit in memory can be accessed just like BINARY or VARCHAR column data. But lobs are usually much larger and therefore accessed with special JDBC methods.

To insert a lob into a table, or to update a column of lob type with a new lob, you can use the setBinaryStream() and setCharacterStream() methods of JDBC java.sql.PreparedStatement. These are very efficient methods for long lobs. Other methods are also supported. If the data for the BLOB or CLOB is already a memory object, you can use the setBytes() or setString() methods, which are efficient for memory data. Another method is to obtain a lob with the createBlob() and createClob() methods of java.sql.Connection, populate its data, then use the setBlob() or setClob() methods of PreparedStatement. Yet another method is to create instances of org.hsqldb.jdbc.JDBCBlobFile and org.hsqldb.jdbc.JDBCClobFile and construct a large lob for use with the setBlob() and setClob() methods.
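The first two approaches can be sketched with standard JDBC calls. The table `documents` and its columns, the class name and the method names are assumptions made for this illustration. The methods take an already open connection to a database in which that table exists, and wrap `SQLException` in an unchecked exception only to keep the sketch short.

```java
import java.io.InputStream;
import java.io.Reader;
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;

class LobInsertSketch {
    // Hypothetical table used for illustration:
    //   CREATE TABLE documents (id INTEGER PRIMARY KEY, body BLOB, notes CLOB)
    private static final String INSERT_SQL =
        "INSERT INTO documents (id, body, notes) VALUES (?, ?, ?)";

    // Streams the lob data, so the caller does not need to load it into memory first.
    static int insertStreams(Connection conn, int id, InputStream body, Reader notes) {
        try (PreparedStatement ps = conn.prepareStatement(INSERT_SQL)) {
            ps.setInt(1, id);
            ps.setBinaryStream(2, body);
            ps.setCharacterStream(3, notes);
            return ps.executeUpdate();
        } catch (SQLException e) {
            throw new IllegalStateException("lob insert failed", e);
        }
    }

    // For data that is already a memory object, setBytes() and setString() can be used.
    static int insertInMemory(Connection conn, int id, byte[] body, String notes) {
        try (PreparedStatement ps = conn.prepareStatement(INSERT_SQL)) {
            ps.setInt(1, id);
            ps.setBytes(2, body);
            ps.setString(3, notes);
            return ps.executeUpdate();
        } catch (SQLException e) {
            throw new IllegalStateException("lob insert failed", e);
        }
    }
}
```

A call might look like `LobInsertSketch.insertStreams(conn, 1, Files.newInputStream(bodyPath), Files.newBufferedReader(notesPath))`, where `conn`, `bodyPath` and `notesPath` are supplied by the application.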

A lob is retrieved from a ResultSet with the getBlob() or getClob() method. The streaming methods of the lob objects are then used to access the data. HyperSQL also allows efficient access to chunks of lobs with getBytes() or getString() methods. Furthermore, parts of a BLOB or CLOB already stored in a table can be modified. An updatable ResultSet is used to select the row from the table. The getBlob() or getClob() methods of ResultSet are used to access the lob as a java.sql.Blob or java.sql.Clob object. The setBytes() and setString() methods of these objects can be used to modify the lob. Finally, the updateRow() method of the ResultSet is used to update the lob in the row. Note that these modifications are not allowed with compressed or encrypted lobs.
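The chunked access and in-place modification calls belong to the standard `java.sql.Blob` interface, so they can be tried without a database. The sketch below uses the JDK's in-memory `javax.sql.rowset.serial.SerialBlob` as a stand-in for the `Blob` that `ResultSet.getBlob()` would return. The class and method names are hypothetical, and the behaviour of a HyperSQL lob may differ in detail.

```java
import java.sql.Blob;
import java.sql.SQLException;
import javax.sql.rowset.serial.SerialBlob;

class LobAccessSketch {
    // Stand-in for rs.getBlob(...): an in-memory Blob from the JDK.
    static Blob inMemoryBlob(byte[] data) {
        try {
            return new SerialBlob(data);
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        }
    }

    // Reads the lob chunk by chunk with Blob.getBytes(); lob positions start at 1.
    static long checksum(Blob blob, int chunkSize) {
        try {
            long sum = 0;
            long length = blob.length();
            for (long pos = 1; pos <= length; pos += chunkSize) {
                int n = (int) Math.min(chunkSize, length - pos + 1);
                for (byte b : blob.getBytes(pos, n)) {
                    sum += b & 0xff;
                }
            }
            return sum;
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        }
    }

    // Overwrites part of the lob, starting at the 1-based position pos.
    static byte[] overwrite(Blob blob, long pos, byte[] replacement) {
        try {
            blob.setBytes(pos, replacement);
            // With an updatable ResultSet positioned on the row, a call to
            // updateRow() would follow here to store the modified lob.
            return blob.getBytes(1, (int) blob.length());
        } catch (SQLException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Blob blob = inMemoryBlob(new byte[] {1, 2, 3, 4, 5});
        System.out.println(checksum(blob, 2)); // 15
        byte[] after = overwrite(blob, 2, new byte[] {10, 10});
        System.out.println(java.util.Arrays.toString(after)); // [1, 10, 10, 4, 5]
    }
}
```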

Lobs are logically stored in columns of tables. Their physical storage is a separate *.lobs file. This file is created as soon as a BLOB or CLOB is inserted into the database. The file will grow as new lobs are inserted into the database. In version 2, the *.lobs file is never deleted even if all lobs are deleted from the database. In this case you can delete the *.lobs file after a SHUTDOWN. When a CHECKPOINT happens, the space used for deleted lobs is freed and is reused for future lobs. By default, clobs are stored without compression. You can use a database setting to enable compression of clobs. This can significantly reduce the storage size of clobs.

From version 2.3.4 there are two options for storing Java Objects.

The default option allows storing Serializable objects. The objects remain serialized inside the database until they are retrieved. The application program that retrieves the object must include in its classpath the Java Class for the object, otherwise it cannot retrieve the object.

Any serializable Java Object can be inserted directly into a column of type OTHER using any variation of PreparedStatement.setObject() methods.
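The classpath requirement follows from how Java serialization works. The sketch below shows a plain serialization round trip with no database involved. The `Point` class and the helper names are hypothetical, and the byte array stands in for the serialized form that the database keeps.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

class SerializedObjectSketch {
    // A hypothetical application class that could be stored in an OTHER column.
    static final class Point implements Serializable {
        private static final long serialVersionUID = 1L;
        final int x;
        final int y;

        Point(int x, int y) {
            this.x = x;
            this.y = y;
        }
    }

    // Turns the object into bytes, the form in which it would be kept.
    static byte[] serialize(Serializable obj) {
        try (ByteArrayOutputStream bytes = new ByteArrayOutputStream();
             ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(obj);
            out.flush();
            return bytes.toByteArray();
        } catch (IOException e) {
            throw new IllegalStateException(e);
        }
    }

    // Rebuilds the object; this fails with ClassNotFoundException when the
    // object's class is not on the classpath of the reading program.
    static Object deserialize(byte[] data) {
        try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(data))) {
            return in.readObject();
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Point restored = (Point) deserialize(serialize(new Point(3, 4)));
        System.out.println(restored.x + "," + restored.y); // 3,4
    }
}
```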

The alternative Live Object option is for mem: databases only and is enabled when the database property sql.live_object=true is appended to the connection property that creates the mem database. For example 'jdbc:hsqldb:mem:mydb;sql.live_object=true'. With this option, any Java object can be stored, because the objects are not serialized. The SQL statement SET DATABASE SQL LIVE OBJECT TRUE can also be used. Note that the SQL statement must be executed on the first connection to the database before any data is inserted. No data access should be made from this connection. Instead, new connections should be used for data access.

For comparison purposes and in indexes, any two Java Objects are considered equal unless one of them is NULL. You cannot search for a specific object or perform a join on a column of type OTHER.

Java Objects can simply be stored internally and no operations can